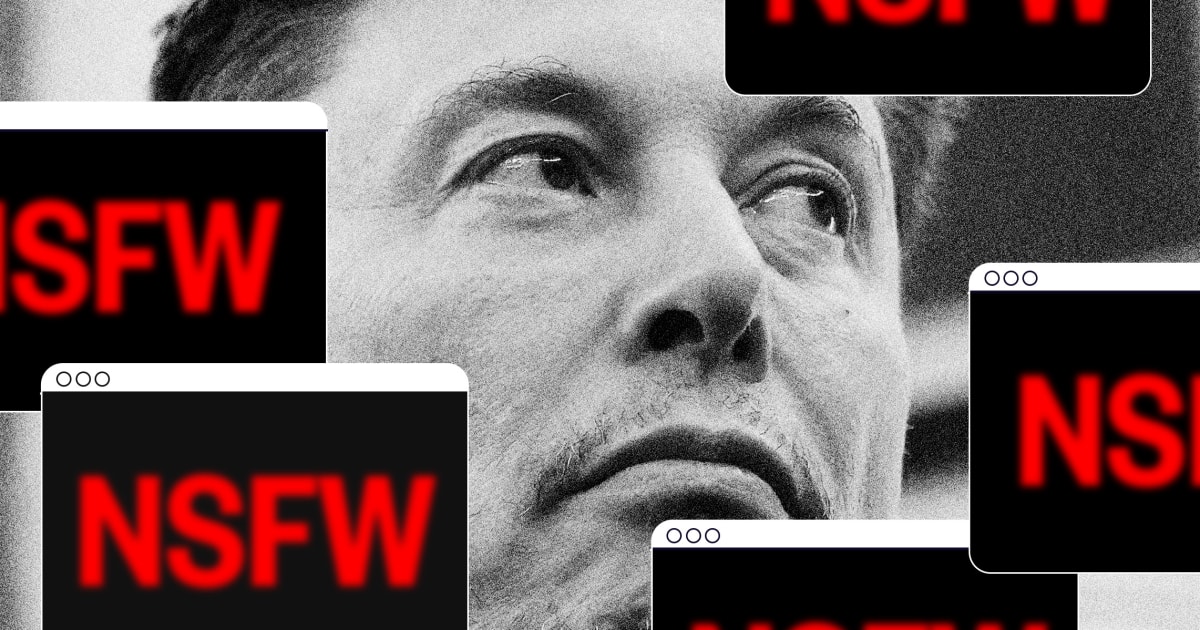

Musk’s Grok AI chatbot is still making sexual deepfakes, despite X’s promise to stop it - BERITAJA

Elon Musk’s artificial intelligence software, Grok, continues to make sexualized images of people without their consent, despite his company’s pledge months ago to halt abusive deepfakes after a public backlash and government investigations.

A review by Beritaja found dozens of AI-generated sexual images and videos depicting real people posted publicly on Musk’s social media app, X, over the past month. The images show women whose likenesses were edited by the AI chatbot to put them in more revealing clothing, such as towels, sports bras, skintight Spider-Woman outfits or bunny costumes. Many of the women are pop stars or actors.

The Grok software, created by Musk’s company xAI, made the images at the request of users who tried to break through undressing restrictions the service put in place in January. Either Grok, via its X account, or the users then posted the images to X.

The images are similar to ones that sparked a firestorm of criticism in January, when Musk’s companies freely allowed people to undress others simply by uploading photos and typing prompts such as “put her in a bikini.” Musk’s companies had embraced the idea, promoting the “spicy mode” of his AI chatbot. The flood of fake images, including some of children, prompted government investigations on five continents.

The number of sexualized deepfakes created by Grok and posted to X appears to have decreased significantly since the flood in January. In posts reviewed by Beritaja, the Grok software turns down or ignores many of the sexualized requests it receives publicly on X. None of the women in Grok-generated images seen by Beritaja were naked, and none appeared to be minors.

But experts told Beritaja that it’s difficult to track everything Grok produces, especially when people access the software privately on Grok’s app, on the Grok website or on the private Grok tab of X. It’s also difficult to search X for all public examples of sexualized deepfakes.

“When these images are being created and spread around, the people in the images don’t necessarily find out,” said Stefan Turkheimer, the vice president for public policy at RAINN, an advocacy group dedicated to fighting sexual assault.

xAI, the Musk-owned AI startup that created Grok and also owns X, said Monday it wanted to review Beritaja’s findings. A representative did not respond to follow-up questions. On Tuesday, most of the images were no longer on X and had been replaced with messages saying the post “is unavailable” or “violated the X Rules.” X and Musk did not respond to a separate request for comment.

The new examples seen by Beritaja show that Grok users have updated their strategies to try to stay ahead of xAI’s engineers and X’s content moderators. While Grok now appears to turn down or ignore requests from users to put people “in a bikini,” it has complied with other queries.

The examples were not difficult to find using the search function on the X website.

In one trend, a user asks Grok to create an image by melding two images they submit simultaneously: first, a photo of a woman, often a celebrity, and second, a drawing of a stick figure with its legs spread, either in a squat or a split. The request includes a prompt telling Grok to make the woman “strike the pose from the second image” or “match the pose.” The resulting deepfake emphasizes the woman’s crotch.

A second trend involves users asking Grok to swap the clothing of women in two separate photos, with at least one of the photos showing tight or revealing clothing.

And in a third set, users have uploaded what appear to be authentic photos of women and asked Grok to transform the photos into video clips, sometimes with sexualized results. In one example from March 12, Grok complied by generating a video in which a likeness of an actor fondles her breasts, based on an image in which she is not touching them. In another example from April 6, Grok created a video of the same actor with her legs spread apart, based on a photo in which her legs were crossed.

At least one of the celebrities depicted in the deepfakes has publicly complained about such images in the past.

The findings come after X committed to preventing the creation of such images.

X said in a statement in January that it had “implemented technological measures to prevent the Grok account on X globally from allowing the editing of images of real people in revealing clothing such as bikinis. This restriction applies to all users, including paid subscribers.”

Genevieve Oh, an independent analyst whose research on deepfakes has been widely cited, said in an email that she believes Grok “was and still is unmistakably the largest nonconsensual synthetic nudity generator” in the world. While she said her research is ongoing, she said it’s likely that Grok surpasses the output of all other “nudifier tools” combined. Similar apps have circulated for years, causing disruptions at schools and leaving victims searching for recourse.

The Center for Countering Digital Hate, which estimated in January that Grok produced 3 million sexualized images during an 11-day period, said last week that it was also still finding nonconsensual deepfakes made by Musk’s AI.

“Perverts could still use Grok to put women and girls into sexualized positions and outfits, despite the platform’s claims otherwise,” Imran Ahmed, the center’s CEO and founder, said in a statement.

When Grok instituted the changes that allowed the creation of sexualized deepfakes, it was alone among the most popular AI platforms in relaxing its guardrails to such a degree.

Last month, there was a sign that Musk’s companies could be backtracking from the promise they made in January. In the Netherlands, where an advocacy organization sued xAI over sexualized deepfakes, the company argued at a court hearing that it could not stop all abuse of its tools and should not be penalized for the actions of malicious users, according to an account of the hearing by Reuters.

Individual sex offenders have been persistent in trying to exploit system loopholes, not only on Grok but also elsewhere, according to law enforcement.

The National Center for Missing & Exploited Children, which runs the CyberTipline, a national centralized reporting system for online child exploitation, said members of the public are sending it reports describing incidents in which children or abuse survivors may have been exploited using Grok. NCMEC described similar complaints in January.

NCMEC said, though, that it has not independently researched Grok’s actual capabilities.

“NCMEC is concerned about any AI technology that has the potential to generate child sexual abuse material or otherwise facilitate the exploitation of children,” it said in a statement.

Musk has denied that Grok produced child sexual abuse material. He wrote in a Jan. 14 post that he was “not aware of any naked underage images generated by Grok. Literally zero.”

Eight separate law enforcement and regulatory agencies told Beritaja this month that they are continuing their investigations of Grok’s nudification and sexualization capabilities. Those authorities are the California attorney general’s office, Australia’s eSafety office, the Privacy Commissioner of Canada, the European Commission, Ireland’s Data Protection Commission, the Paris public prosecutor and a pair of British agencies: the Office of Communications, or Ofcom, and the Information Commissioner’s Office.

“California’s investigation is still very much underway. Beyond this, to protect an ongoing investigation, we do not have further updates to share at this time,” the office of California Attorney General Rob Bonta said in an email.

Even more government authorities expressed outrage in January and February, though not all of them have confirmed that their investigations are ongoing. Italy, which issued a warning in January that some Grok-created images could be criminal, decided not to launch its own investigation and chose instead to monitor the investigations by Ireland and the European Commission, a spokesperson said last week. (X has its European office in Dublin.)

Malaysia’s communications commission, which blocked and then restored access to Grok in January, said in an email Tuesday that it was not currently investigating the matter.

xAI separately faces several lawsuits over Grok’s generation of sexualized images. They include two lawsuits proposed as class actions in federal court in California, brought by women and girls whose likenesses were edited by Grok, and a suit by the city of Baltimore alleging violations of its consumer protection code. Court dockets in those cases do not yet show any responses from Musk’s companies.

A fourth case, in the Netherlands, led to an order last month for Grok to cease generating undressing images of adults or children.

The investigations and lawsuits are underway at a delicate time for Musk’s business empire. In February, xAI was acquired by another of Musk’s companies, SpaceX, the rocket launch provider and satellite internet business. In June, SpaceX plans an initial public offering of its shares to raise billions of dollars in additional capital.

The decision to fold xAI into SpaceX means the rocket company almost certainly will be on the hook for any potential future fines related to Grok’s behavior, legal experts said, though they said it’s not clear whether such fines would be considered material to SpaceX’s expected valuation of $2 trillion.

SpaceX did not respond to a request for comment.

Musk has promoted Grok’s ability to create sexualized images. He has often posted AI-generated images of cartoonish women in sexual situations or in tight or revealing clothing. In a post in October responding to someone who had shared an AI video of a sexualized robot, Musk complained: “Hmm, our competitors do better deep fakes. We will have to step up our game.”

xAI released a new generative AI video tool last year called “Imagine,” which included what the company called “Spicy” mode, allowing the creation of AI-generated not-safe-for-work content. The Verge reported that it created topless deepfakes of pop star Taylor Swift without the user asking for them.

In late December, users began to complain about a wave of sexualized deepfakes targeting women and girls whose photos Grok had digitally edited to make them appear naked or almost naked. Grok said Dec. 31 on X that there were “isolated cases where users prompted for and received AI images depicting minors in minimal clothing.” In a separate post, the software said that it “deeply regretted” what it had done.

xAI initially did not change the product and instead put the onus on users to obey laws about child abuse.

“Anyone using Grok to make illegal content will suffer the same consequences as if they upload illegal content,” Musk posted on Jan. 3.

But the global backlash soon overwhelmed the company. A British watchdog, the Internet Watch Foundation, reported on “criminal imagery” that online users said was created with Grok, and other researchers independently found that Grok was producing thousands of sexualized images an hour. X restricted AI image generation to paying customers on Jan. 9 and announced the more comprehensive crackdown on Jan. 14.

In February, French authorities raided X’s offices in the country in connection with the deepfakes and other issues. They also said they planned to summon X executives and employees, including Musk and former X CEO Linda Yaccarino, to Paris for interviews the week of April 20. X condemned the search as an “abusive act of law enforcement theater.” It’s not clear whether French authorities still hope to conduct those interviews this month. The Paris prosecutor’s office said in a statement last week that its investigation continues, with no new information available.

European Union regulators can sometimes take years to reach decisions. They spent two years investigating X before they announced in December that they were fining the company the equivalent of $140 million for breaching transparency obligations. Musk has vowed to fight the fine.

Britain’s Internet Watch Foundation said its analysts have been unable to search for criminal material on Grok beyond its paywalls, so it does not know what Grok’s users are generating now. The foundation said it is not enough for Musk to limit the AI tools to paying customers.

“Our position is that tech companies must make sure the products they build and make available to the global public are safe by design,” it said in a statement.

“If that means Governments and regulators need to force them to design safer tools, then that is what must happen. Sitting and waiting for unsafe products to be abused before taking action is unacceptable,” it said.